Consider a business use case in which your partners or clients regularly drop a set of data files into a specific storage location, such as a cloud storage platform or an FTP/SFTP site. The data files could be in any supported format, such as Excel files, flat files (.CSV format), Avro, Parquet, etc. You are expected to develop an automated ETL process that picks up those files on a regular schedule and loads each file's content into a database table. There are certain hurdles in accomplishing this, the main one being that you may not know all the file names beforehand within the ETL process, so we need a strategy that loops through the folder and processes each file individually.

In this blog post, we will show you how to design an SSIS ETL process that picks up all the Excel files from a particular cloud storage location and processes each of them by loading the data into a database table. To achieve this, we will use a few components from our SSIS Productivity Pack product, which will make the development significantly easier. Here, we work with files from an Azure Blob container, but the same idea can be applied to an FTP/SFTP site, or any other cloud storage platform supported by the SSIS Productivity Pack or our SSIS Integration Toolkit, such as Google Drive, Dropbox, Box.com, OneDrive, SharePoint, Google Cloud Storage, etc. Let's get started.

Setup

As we just mentioned, we will be working with an Azure Blob container as the storage location for our business use case. We have a few external processes that drop Excel files into the Azure Blob container folder path every day. The files are named in the following format:

Filename-ddmmyyyy.xlsx

Here, "Filename" can be any file name, depending on which vendor or process dropped the file in the location, and ddmmyyyy is the current date. Our task is to pick up all those Excel files, read them one by one, and write the Excel data into the same table. Please note that this example deals with Excel files, but the same approach applies to flat files too. We assume that all the files have the same metadata, as they all target the same destination table in a SQL Server database. We would be using the components shown below for this purpose:

- Premium File System Source component

- Azure Blob Storage Connection manager

- Premium Excel Source component

- Premium ADO.NET Destination component

- Recordset Destination component

- SSIS Foreach loop container

Design

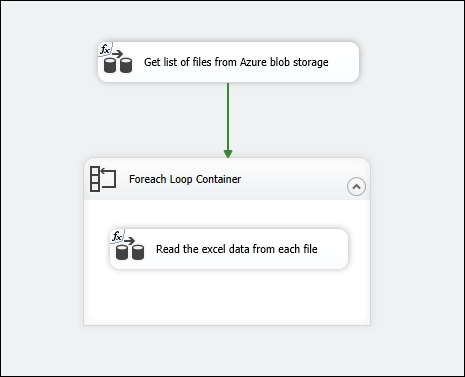

The package design, as a whole, looks something like the following.

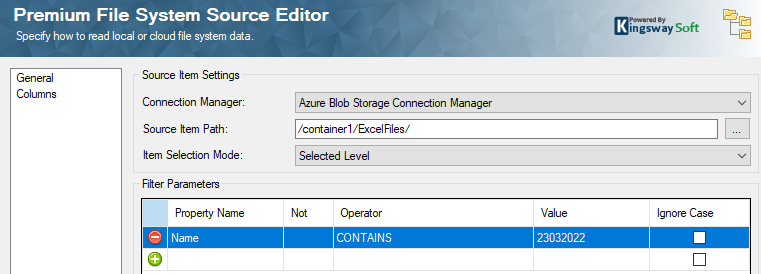

The first Data flow task - "Get list of files from Azure Blob storage" - gets the list of files from the Azure Blob storage container folder path. Inside this task, the Premium File System Source component is connected to the Azure Blob Storage location, and it gets the files from a "Selected level", filtered on the current date in the required format. Please refer to our Online Help Manual for details on how to set up the Azure Blob Storage connection manager.

For this, we first create a string variable, @[User::CurrDate], and set up the variable with an expression in the package using the expression editor.

RIGHT("0" + (DT_WSTR, 2) DAY(GETDATE()), 2)+ RIGHT("0" + (DT_WSTR, 2) MONTH(GETDATE()), 2)+(DT_WSTR, 4)YEAR( GETDATE() )

The above will give the current date in the format ddmmyyyy, which would then be used in the Premium File System Source component filter by using a "Contains" operator on the Name property (since the file name contains this value).
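If you would like to check the expected value outside of SSIS, the following is a minimal Python sketch of the same formatting logic. The function name `curr_date_token` is hypothetical and is not part of the package; it only mirrors the ddmmyyyy string that the expression above produces.

```python
from datetime import date


def curr_date_token(today: date) -> str:
    """Return the given date as ddmmyyyy (zero-padded day and month, 4-digit year)."""
    # %d and %m are zero-padded, which matches the RIGHT("0" + ..., 2) padding
    # used in the SSIS expression.
    return today.strftime("%d%m%Y")


if __name__ == "__main__":
    # Uses the machine's local date, similar to GETDATE() in the expression.
    print(curr_date_token(date.today()))
```

For example, 7 March 2024 would yield 07032024, so a file named Sales-07032024.xlsx (an illustrative name) would match the filter on that day.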

Please note that the Filter parameters property is parameterized, and therefore it is dynamic. The parameterization of the filter property can be done by following the steps mentioned in this blog post. For example, the expression would look like this:

"[{\"Name\":\"Name\",\"Operator\":\"CONTAINS\",\"Value\":\""+ @[User::CurrDate] +"\"}]"

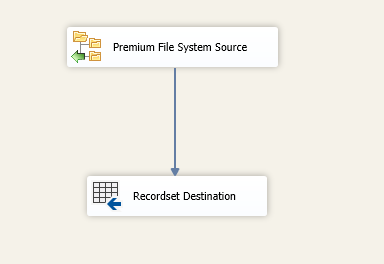
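To make the shape of the evaluated filter value easier to see, here is a small Python sketch that builds the same JSON text and applies a "contains" check to a list of names. This is only an illustration: the helper names `build_name_filter` and `select_files` are hypothetical, the sample file names are made up, and the matching logic is our assumption about what a CONTAINS filter on the Name property means, not the component's internal implementation.

```python
import json


def build_name_filter(curr_date: str) -> str:
    """Build the JSON text that the SSIS expression evaluates to at runtime."""
    conditions = [{"Name": "Name", "Operator": "CONTAINS", "Value": curr_date}]
    # Compact separators reproduce the whitespace-free form used in the expression.
    return json.dumps(conditions, separators=(",", ":"))


def select_files(file_names, filter_json):
    """Keep names that satisfy every CONTAINS condition (assumed semantics)."""
    conditions = json.loads(filter_json)
    return [
        name
        for name in file_names
        if all(
            cond["Value"] in name
            for cond in conditions
            if cond["Operator"] == "CONTAINS"
        )
    ]


if __name__ == "__main__":
    filter_json = build_name_filter("07032024")
    print(filter_json)
    print(select_files(["Sales-07032024.xlsx", "Sales-06032024.xlsx"], filter_json))
```

With the sample input, only the file whose name contains 07032024 is kept, which is the behavior we rely on to pick up just the current day's files.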

Once you have this ready, you could attach the Premium File System Source component to a Recordset Destination component. This component is used to store the records (filenames) that we receive from the source in an object variable @[User::readfiles].

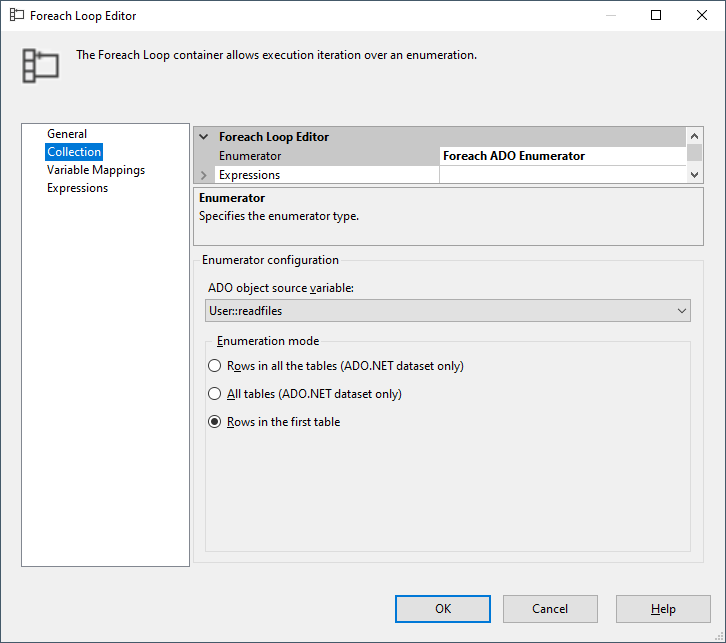

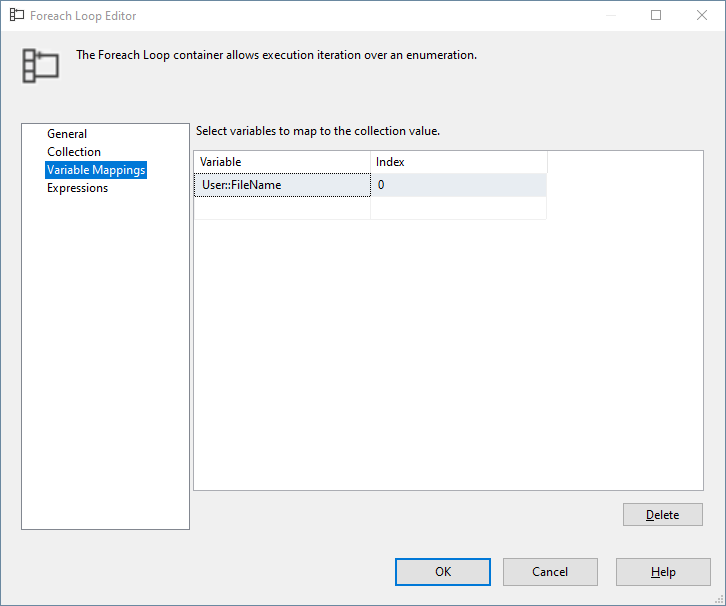

Now, in the Control flow, drag and drop an SSIS Foreach loop container, which can be configured as shown below (using the Foreach ADO Enumerator) to iterate over the Recordset stored in the object variable, one record per iteration.

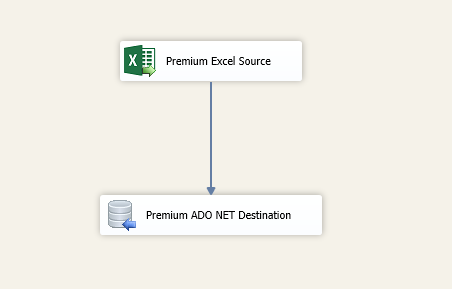

Create another string variable, @[User::FileName], and map it to Index "0" in the Variable Mappings so that it receives the file name from each record of the Recordset held in the object variable. Drag and drop another Data flow task inside the Foreach loop container - "Read the Excel data from each file" - which will contain the Premium Excel Source component.

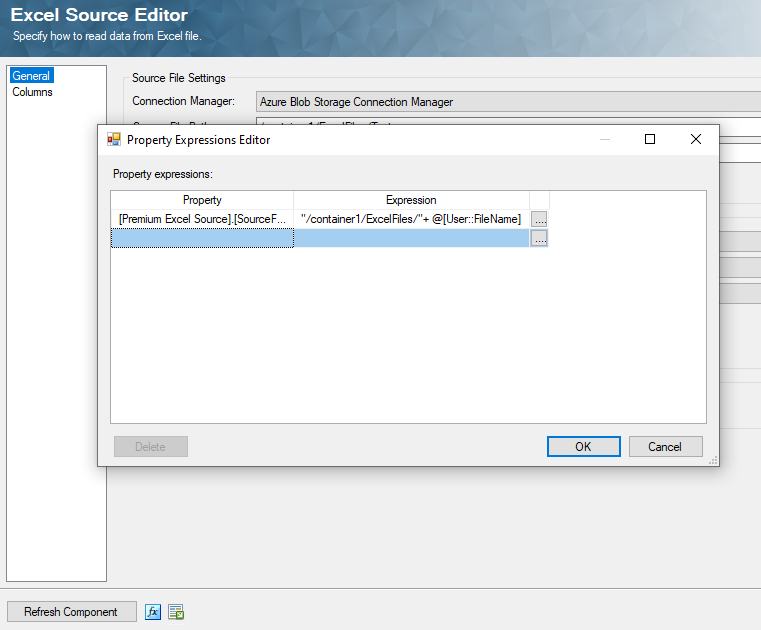

The Source File Path property in the Premium Excel Source component is parameterized so that the variable @[User::FileName], which receives the file name in each Foreach loop iteration, is appended to the folder path.

With this setup, the Source File Path property gets populated, one iteration at a time, with the file names that are pulled in from the Azure Blob storage location folder path. The Premium Excel Source component is then connected to a Premium ADO.NET Destination component, where the incoming columns are mapped to a table so that the values are written as they come in.
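The loop described above can be sketched in Python as follows. This is a conceptual outline under stated assumptions, not the package itself: the folder name `incoming`, the helper names, and the `load_file` callback (standing in for the Data flow task that reads one Excel file and writes it to the table) are all hypothetical.

```python
from typing import Callable, Iterable, List, Tuple


def build_source_path(folder_path: str, file_name: str) -> str:
    """Append the file name to the folder path, as the parameterized property does."""
    return folder_path.rstrip("/") + "/" + file_name


def run_foreach(
    recordset: Iterable[Tuple[str, ...]],
    folder_path: str,
    load_file: Callable[[str], None],
) -> List[str]:
    """Process each record once, mimicking the Foreach loop container."""
    processed = []
    for row in recordset:
        file_name = row[0]  # Index 0 of the record plays the role of @[User::FileName]
        source_path = build_source_path(folder_path, file_name)
        load_file(source_path)  # stand-in for "Read the Excel data from each file"
        processed.append(source_path)
    return processed


if __name__ == "__main__":
    # Hypothetical records, as the first Data flow task might have stored them.
    records = [("Sales-07032024.xlsx",), ("Orders-07032024.xlsx",)]
    run_foreach(records, "incoming", lambda path: print("loading", path))
```

The point of the design is that the file names are never hard-coded: the first Data flow task discovers them, and the loop feeds them one at a time to a single, reusable Data flow task.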

Sample package

We have a sample package that can get you started with this design, which you can download from this link. The connection managers are stored with generic details, so please feel free to replace them with your own credentials. You can add the package to a project of your own to open it and give it a try.

Conclusion

In short, when you run the package, the variable @[User::CurrDate] will hold the current date in the required format, which is then evaluated against the Name property to get a list of files. This record set is in turn stored in the object variable @[User::readfiles]. In the Foreach loop iterations, the file names are assigned one by one to the string variable @[User::FileName], which is then used in the Source File Path property of the Premium Excel Source component inside the loop.